Harmony: A Design System Built for Machines

AI-Ready Design Infrastructure at Arcos

- 3 days: down from a 2-week investigation sprint

- 12 hours: down from ~40 hours for a wired Figma prototype

- ~30% faster: across piloted delivery cycles

- 2 pods, 3 senior designers

The moment it clicked

The thing about BetterCloud Fulcrum is that it was good. The component library was well-structured, the Figma file was clean, the design intent was documented. What it became, in practice, was the best prototyping library we’d ever had. Design sprints moved faster. High-fidelity concepts that used to take days took hours. For design, it was genuinely transformative.

For engineering, it never existed. Not because they rejected it — there was no rejection, no debate, no principled objection. There was just no capacity to investigate it, plan a pilot, build an implementation strategy. Every team was already mid-sprint, already negotiating what was getting cut this cycle. BetterCloud Fulcrum never crossed the boundary into engineering not because it wasn’t ready but because the organization never had a quiet moment to receive it. What I learned: a design system that lives entirely on the design side of the boundary is a very powerful prototyping tool. It is not, yet, infrastructure.

TVH Uplift had made it across the design-to-engineering boundary, which is what made its situation more instructive. Engineering teams were using it — inconsistently, without full confidence, in pockets rather than as standard practice. The Figma library and the codebase had drifted enough that developers weren’t sure whether following the system would introduce more problems than it solved. Some teams trusted it. Others built around it. The system existed in a state of partial adoption that was, in its own way, more telling than no adoption at all: it showed exactly where trust breaks down when a design system doesn’t have protected organizational ownership. Moving design system engineers under UX, co-designing governance with an engineering advocate, and giving the system genuine capacity is what stabilized it and earned it broader use. Uplift taught me that a design system needs the right home in the organization before it can fulfill what it was built to do.

Both of those experiences worked more as calibration than as cautionary tales. By the time I got to Arcos, I understood two things clearly: a system that doesn’t cross into engineering isn’t infrastructure yet, and a system without protected ownership can’t hold its own trust. Harmony starts from both of those as solved premises. But there was a third problem neither system had ever had to answer, because it didn’t fully exist yet. The moment AI entered the delivery pipeline, the gap between what humans could read in a design system and what machines could actually execute against it stopped being theoretical. That’s the gap Harmony was built to close.

The diagnosis

AI is very good at moving fast and very bad at knowing what it doesn’t know, which is a serious problem in a design-to-dev pipeline that isn’t plumbed for it. Feed a model a Figma spec and it will produce code. Confidently. With the same small judgment calls a developer would make, except a developer can ask a question and a model will just pick. The handoff problem didn’t go away when AI showed up. It accelerated.

At the same time, agentic coding was emerging fast enough that the question stopped being theoretical. Cursor. Copilot. Claude Code. Figma Dev Mode with AI assist. Every one of these tools is only as good as the context it can access, and the context most design systems were built to provide was designed for a human reader who could infer, interpret, and ask for clarification. A token named color-feedback-warning tells a developer something. It tells a model a label. A component documented with props and variants tells an engineer how to use it. It tells an agent the syntax. What neither of those provides is the thing that actually determines whether the output is right: the why. Why this token and not the similar one two rows up. Why this component and not the raw library element underneath it. Why this layout serves the user’s actual goal in this specific moment.

Vibe coding accelerated the urgency further. As more teams started reaching for AI-generated UI as a starting point rather than a finishing tool, the design systems field started confronting a problem it had mostly deferred: a system that can’t communicate intent to a machine isn’t infrastructure anymore. It’s a reference document. Useful. Not load-bearing.

What had to be true for Harmony to work was that intent had to be legible at every layer of the chain. Not implied by naming conventions. Not written in a Confluence page somewhere. Encoded, structured, and delivered into the agent’s working context at the moment the agent was making decisions. Token descriptions that carry the why and the when, not just the what. Component documentation grounded in user need and goal, not just interaction states and prop tables. Feature-level user intent flowing in from the design spec, scoped to the specific thing being built right now. And underneath all of it, a set of agents that don’t just assist but enforce: catching a developer reaching for raw MUI when a Harmony component exists, validating built output against the design spec before it ships.

That’s a different kind of design system than the field has mostly been building. It’s also, given where agentic coding is going, the only kind that will still matter in two years.

The vision

The argument I needed to make at Arcos wasn’t that we should build a design system. Most organizations agree to that in principle and then never give it the protected capacity it needs. The argument was something more specific and harder to land: we needed to build a design system that treated AI agents as a primary consumer alongside humans. Not a downstream beneficiary. A first-class reader.

That sounds simple stated cleanly. It wasn’t simple to advocate for in 2025. Most of the design systems conversation was still focused on tokens and components: the shapes and values, the Figma file, the component library structure. The deeper bet I was making was that within the next two years, the question every design system would be asked is not “is it well-organized for designers” but “can an AI agent build accurately against it without supervision.” That’s a different brief. It changes what gets documented, where the documentation lives, how it’s structured, and who else has to be in the room while it’s being designed.

The case I built had three parts. The first was cost: every investigation sprint, every correction loop, every revision cycle was a tax on velocity that would only get worse as AI accelerated the noise. The second was quality: a system that lets a model guess produces code that drifts from intent in ways nobody catches until QA, or worse, until production. The third was strategic: companies that built design infrastructure for the agentic era would compound their velocity advantage every quarter; companies that didn’t would discover that “AI is making us faster” wasn’t quite the same thing as “AI is making us better.” Arcos couldn’t afford to be in the second group.

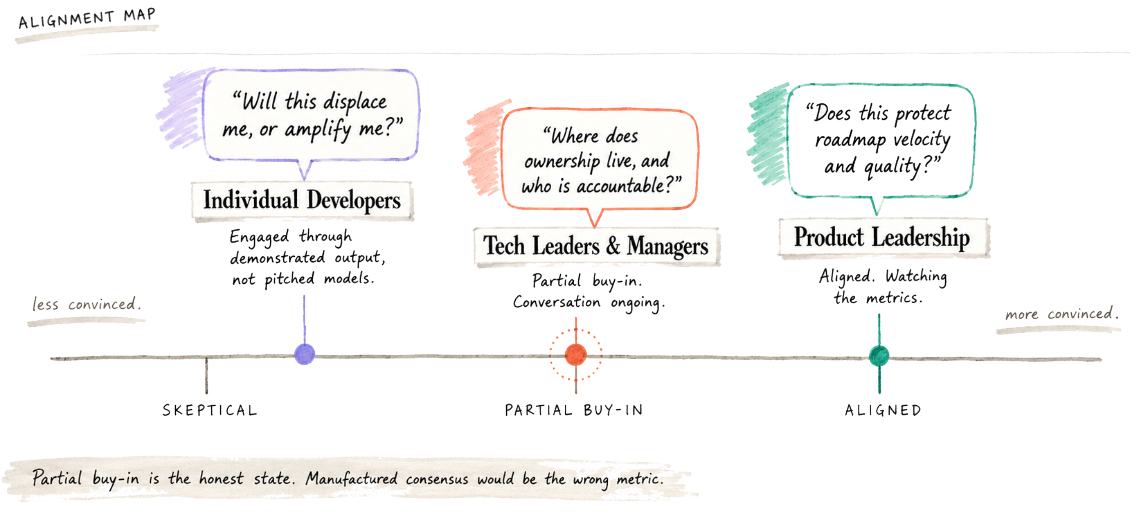

Getting that argument heard required building a coalition before building anything. Individual developers came on board first. They were paying the highest cost for the existing handoff and saw the relief most clearly. Product leadership followed, because faster validated direction is something product organizations universally want when they can get it. Tech leadership was the harder room, and the conversation there is still ongoing. Their concerns about codebase ownership, accountability, and what “UX-generated code” actually meant inside an engineering org were legitimate questions that deserved careful answers, not workarounds. What I cared about more than full alignment on day one was shared direction. We agreed on the vision. We agreed on the path. We started moving together. Big ideas like this don’t land in a single meeting and they shouldn’t. What matters is whether the people in the room are negotiating in good faith toward the same outcome and whether the work is visibly making progress against the shared frame. That’s the kind of alignment I optimize for. It’s slower in the first month and dramatically faster everywhere after that.

The approach

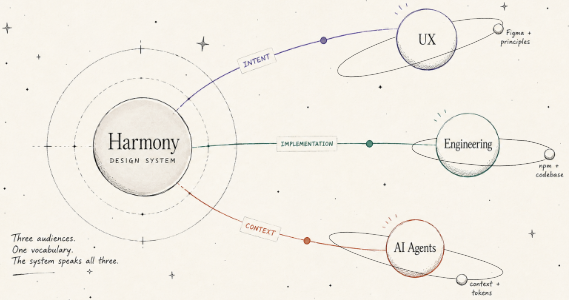

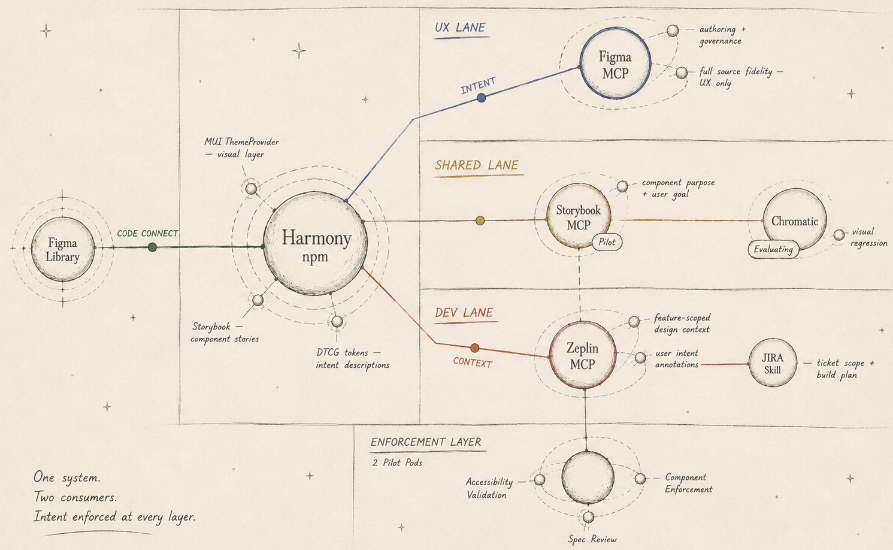

The architecture had to do something unusual: communicate the same design intent at three different levels of abstraction, to three different consumers, in three different formats. Tokens needed to carry their own meaning at the value level. Components needed to carry their own meaning at the system level. Features needed to carry their own meaning at the user-goal level. And all three layers needed to be machine-readable in a way that flowed naturally into the agent’s working context — not requested, not retrieved, just present.

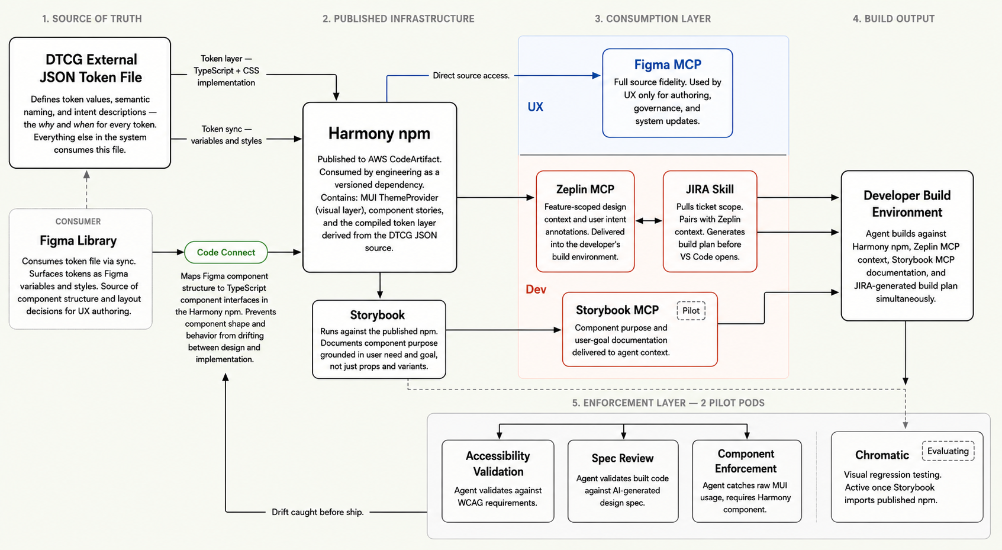

The chain we built starts with the tokens. Harmony uses DTCG format because the standard is open, the tooling is mature, and the description field is structured. Every token carries a written description of why the value exists and when to use it versus a similar token. The names are still semantic, which solves the human readability problem, but the descriptions carry the layer of meaning a name can’t, the layer that determines whether a model picks the right token for the right reason instead of the closest syntactic match. From the tokens, the system builds upward: components in the Harmony npm package, published to private AWS CodeArtifact, consumed by engineering as a versioned dependency. Figma library components mapped to their TypeScript implementations through Code Connect, so the source of truth and the implementation can’t drift apart silently. Storybook running against the published npm, documenting component purpose grounded in user need rather than just interaction states.
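As a minimal sketch of what a described token can look like: `$value`, `$type`, and `$description` are the DTCG property names, while the token values, the description wording, and both helper functions below are hypothetical and are not taken from Harmony's actual token file.

```typescript
// Minimal shape of a DTCG-style color token. `$value`, `$type`, and
// `$description` are the format's property names; everything else in this
// sketch (values, wording, helper names) is hypothetical.
interface DesignToken {
  $value: string;
  $type: "color";
  $description: string;
}

const feedbackTokens: Record<string, DesignToken> = {
  "color-feedback-warning": {
    $value: "#b45309",
    $type: "color",
    $description:
      "Use for recoverable problems the user can still act on. " +
      "Prefer color-feedback-error when the action has already failed.",
  },
  "color-feedback-error": {
    $value: "#b91c1c",
    $type: "color",
    $description:
      "Use when an action failed or input is invalid and blocks progress. " +
      "Do not use for advisory messages; see color-feedback-warning.",
  },
};

// Render tokens as plain-text context an agent could read next to a spec.
function describeTokens(tokens: Record<string, DesignToken>): string {
  return Object.keys(tokens)
    .map((name) => `${name} (${tokens[name]!.$value}): ${tokens[name]!.$description}`)
    .join("\n");
}

// Governance check: list tokens whose description is too short to carry intent.
function tokensMissingIntent(
  tokens: Record<string, DesignToken>,
  minLength: number = 20
): string[] {
  return Object.keys(tokens).filter(
    (name) => tokens[name]!.$description.trim().length < minLength
  );
}
```

The name alone separates the two tokens only by label. The description is what tells a model when each one applies, and a check like `tokensMissingIntent` is one way a governance agent could keep that layer from going empty.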

The architecture you see now isn’t the one I first drafted. My initial approach was to consume MUI components, override each one with a theme provider, and export the new component — so engineering would import Button from Lighthouse-web instead of from MUI, and UX would own the entire visual layer end to end. The argument for it was clean: total UX control over the rendered component. The argument against it became clear as soon as I worked through the implications. Owning components meant owning MUI version updates, owning bug fixes that originated in MUI, owning every regression that surfaced when MUI released a breaking change. UX didn’t have the engineering capacity for that. We don’t have dedicated React developers the way the design system org at TVH did, and committing to it would have created a dependency UX couldn’t honor.

So I pivoted. Instead of consuming and overriding components, Harmony provides themes — detailed token sets and implementations delivered through MUI’s ThemeProvider, layered on top of unmodified MUI components. UX controls the visual layer through tokens and theme configuration. MUI handles the component layer. The architectures stay cleanly separated, and the system has a safety property that the original approach didn’t: if Harmony fails to load for any reason, MUI falls back to its defaults and the software still works. Nothing catastrophic depends on UX-owned infrastructure. That separation of concerns is what made the system safe enough to ship to production in pilot, and it’s what made tech leadership’s concerns about codebase ownership easier to address, because UX wasn’t claiming ownership of anything we couldn’t sustainably own.
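The fallback property can be illustrated with a small sketch. It assumes a hypothetical `HarmonyTokens` shape and models only a small subset of the options that MUI's `createTheme` accepts (`palette.primary.main`, `palette.warning.main`, `typography.fontFamily`). The MUI import is left out so the sketch runs without dependencies.

```typescript
// Hypothetical token set; field names and values are illustrative only.
interface HarmonyTokens {
  colorPrimary: string;
  colorFeedbackWarning: string;
  fontFamilyBase: string;
}

// A small subset of the options shape that MUI's createTheme accepts.
interface ThemeOptionsSubset {
  palette?: { primary?: { main: string }; warning?: { main: string } };
  typography?: { fontFamily?: string };
}

// Map tokens to theme options. When tokens are unavailable, return an empty
// object, so a theme created from it keeps MUI's built-in defaults.
function toThemeOptions(tokens: HarmonyTokens | undefined): ThemeOptionsSubset {
  if (!tokens) return {};
  return {
    palette: {
      primary: { main: tokens.colorPrimary },
      warning: { main: tokens.colorFeedbackWarning },
    },
    typography: { fontFamily: tokens.fontFamilyBase },
  };
}
```

In an application, the result would be passed to `createTheme` and the resulting theme to `ThemeProvider` from `@mui/material/styles`. Because the theme only overrides values on unmodified components, the empty-options path still renders working UI.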

The agent layer is where the architecture closes. Two MCP servers, each scoped to a different consumer. Designers work in Figma MCP, with full source fidelity, used for authoring, governance, and system updates. Developers work exclusively in Zeplin MCP, which delivers feature-scoped design context and user intent annotations directly into the build environment. The split is deliberate. Designers need access to the source. Developers need the right information for the build in front of them, scoped to the feature, at the moment of implementation. Storybook is also MCP-enabled in pilot, so component purpose and user-goal documentation flow into the agent’s context alongside the feature spec. The kickoff itself is automated: an AI skill pulls the JIRA ticket that defines the feature, pairs it with the relevant Zeplin design context, and generates a build plan before a human opens VS Code.
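The kickoff pairing can be pictured roughly as follows. This is a sketch under stated assumptions: the `FeatureTicket` and `DesignContext` shapes and the `draftBuildPlan` function are hypothetical, and they do not reflect the real JIRA or Zeplin MCP payloads or the actual skill. The sketch shows only the idea of combining ticket scope with feature-scoped design intent into an ordered plan.

```typescript
// Hypothetical shapes; not the real JIRA or Zeplin MCP payloads.
interface FeatureTicket {
  key: string;
  summary: string;
  acceptanceCriteria: string[];
}

interface DesignContext {
  screenName: string;
  userIntent: string;
  components: string[];
}

// Combine the ticket and the feature-scoped design context into plan steps:
// goal first, then user intent, then components, then verification.
function draftBuildPlan(ticket: FeatureTicket, design: DesignContext): string[] {
  const steps: string[] = [
    `Goal for ${ticket.key}: ${ticket.summary}`,
    `User intent on "${design.screenName}": ${design.userIntent}`,
  ];
  for (const component of design.components) {
    steps.push(`Build with component: ${component}`);
  }
  for (const criterion of ticket.acceptanceCriteria) {
    steps.push(`Verify: ${criterion}`);
  }
  return steps;
}
```

Putting user intent ahead of the component list is a deliberate choice in this sketch: the plan states why the feature exists before it states what to build it with.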

Underneath all of that runs a suite of AI agents and skills that don’t just assist but enforce. UX uses agents for design system governance and updates. Two development pods are piloting agents that run accessibility validation, review code against the AI-generated design spec, and catch developers reaching for raw MUI components when a Harmony equivalent exists. The system isn’t asking developers to follow it. It’s making the wrong path visible at the moment they take it. That’s the difference between a design system that works through persuasion and one that works through architecture. Harmony was built to be the second kind.
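One way to picture the component-enforcement check is a scan for named imports from `@mui/material` that have a Harmony equivalent. The sketch below is illustrative only: the package name `@arcos/harmony`, the component names, and the equivalence map are assumptions, and the pilot agents may work quite differently (for example, on a parsed syntax tree instead of a regular expression).

```typescript
// Hypothetical map from raw MUI component names to Harmony equivalents.
// The package name "@arcos/harmony" and the component names are assumptions.
const harmonyEquivalents = new Map<string, string>([
  ["Button", "@arcos/harmony/ActionButton"],
  ["Alert", "@arcos/harmony/FeedbackBanner"],
]);

interface Finding {
  component: string;
  suggestion: string;
}

// Find named imports from "@mui/material" that have a Harmony equivalent.
function findRawMuiImports(source: string): Finding[] {
  const findings: Finding[] = [];
  // Created per call so the global regex's lastIndex state is never shared.
  const importPattern = /import\s*\{([^}]*)\}\s*from\s*["']@mui\/material["']/g;
  let match: RegExpExecArray | null;
  while ((match = importPattern.exec(source)) !== null) {
    const names = (match[1] ?? "")
      .split(",")
      // Keep the imported name and drop any local alias ("X as Y").
      .map((part) => part.trim().split(/\s+as\s+/)[0] ?? "")
      .filter((name) => name.length > 0);
    for (const name of names) {
      const suggestion = harmonyEquivalents.get(name);
      if (suggestion !== undefined) {
        findings.push({ component: name, suggestion });
      }
    }
  }
  return findings;
}
```

Each finding names the component and the suggested replacement, which is the "make the wrong path visible" behavior in its smallest form. Components without an entry in the map pass through untouched.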

Building credibility

The pilot couldn’t run on my work alone. Three senior designers had to be genuinely capable inside the delivery model — not watching me demonstrate it, not rubber-stamping artifacts I produced, but authoring inside the same toolchain with the same fluency. Two weeks of training and environment setup got them into VS Code with Claude Code CLI. That’s a real ask for experienced designers who’d never opened a terminal, and they showed up for it. One senior designer went deeper, partnering with me on the token architecture and helping establish the external JSON file as the canonical source of truth for Harmony’s token layer. The model holds because the team can run it without me, not because I’m the only one who can.

Engineering credibility came from the work being legible to engineers on its own terms. When the first higher-order components (HOCs) shipped from UX into the Lighthouse codebase, the question wasn’t whether they looked right. It was whether they’d be reviewable, trustable, and mergeable by engineers who hadn’t built them. They were. The HOCs lived in the same repository as the production code, used the same vocabulary engineering already used, and arrived through the same dependency channels as any other internal package. None of this asked engineering to take anything on faith. The artifacts spoke for themselves.

The sharpest credibility moment came from somewhere I hadn’t planned. The mobile pod, which wasn’t part of the original delivery model rollout, connected the Zeplin MCP to their own Claude Code workflow during a planning session, using it to visualize shaping outcomes mid-discussion. They also built a UX Designer agent trained on our team’s documented ways of working, using it as a behavioral frame for a model that now operates inside their delivery workflow. They built it themselves, because the documentation was precise enough to make it possible. That moment told me something the pilot metrics couldn’t: when your team’s institutional knowledge is structured well enough to be machine-legible, it doesn’t just scale across the people you trained. It scales across surfaces you never anticipated.

What shipped

The honest inventory matters here, because the case isn’t “we built a complete system in nine months.” The case is that we built it in the right order, and each piece unlocked the next. Some of the chain is in production. Some is launched and instrumenting. Some is architecture-enabled and being evaluated. All of it was sequenced deliberately.

In production. The Zeplin MCP is active across planning and initial build, delivering feature-scoped design context and user intent annotations into the agent’s working environment at the moment developers need them. The AI skill that orchestrates Zeplin and JIRA at kickoff is generating build plans against tickets before a human opens VS Code. What used to be a two-week investigation sprint is now an artifact produced in minutes. Storybook is live with tokens and core components, importing the actual Harmony npm including the MUI dependency and the Harmony theme. UX is running AI agents and skills for design system governance and updates as part of normal operating practice.

Launched and instrumenting. The Harmony npm package is published to AWS CodeArtifact and installed as a dependency in the Lighthouse Web pilot, where existing production code is being refactored against the new token and theme layer. Figma Code Connect just shipped, built using Figma MCP and Claude Code CLI, connecting every Figma library component to its TypeScript implementation in the npm. The pilot is the validation mechanism, not a delay. We’re learning how the model performs against real delivery cycles before scaling it.

Architecture-enabled, evaluating. The Storybook MCP is in pilot with two Lighthouse Web pods, who are assessing accuracy and trustability against their actual feature work. A subset of the agent suite has been deployed in those same two pods for accessibility testing, code review against the AI-generated design spec, and component enforcement: catching developers reaching for raw MUI when a Harmony component exists. Chromatic visual regression is under evaluation by QA now that Storybook is live with the npm and the regression target is real.

The pattern is intentional: foundation first, then chain, then enforcement. Storybook MCP couldn’t pilot until Storybook was live with the npm. Code Connect couldn’t ship until the npm existed. Chromatic only becomes meaningful once Storybook imports the actual Harmony dependency. Every layer needed the layer underneath it to be solid before the next one could be tested honestly. What’s in pilot is in pilot because that’s where the work is right now, not because it stalled.

The larger argument

The thing I most want you to take from this case is that design systems are no longer documentation projects. They’re infrastructure for a build environment in which AI agents are first-class participants. The systems that treat agents as primary consumers will compound velocity and quality every quarter. The systems that don’t will discover that AI’s speed advantage was never the same thing as a quality advantage. Harmony is the design systems equivalent of a compiler. Tokens and components define a constrained execution environment. Intent descriptions, MCP-enabled documentation, and enforcement agents make accurate output the path of least resistance. The system doesn’t ask developers to follow it. It makes the wrong path visible at the moment they take it.

This architecture goes next in three directions, each one building on what’s already in place. The intent layer wants to go deeper. Code Connect just shipped, and the richer version of this architecture has every component carrying an explicit machine-readable contract that an agent can reason about, not just match against. The enforcement layer wants to scale beyond the two pilot pods, with the right tuning for different team contexts, and to evolve from validation toward something closer to active collaboration with the developer during the build itself. And the pattern itself wants to become portable. What we built at Arcos for one product is the same architecture that scales to multi-brand and multi-platform systems, and the next maturity step is making it transferable rather than bespoke. The companies that get this layer right in the next two years are going to compound advantages that everyone else spends a decade trying to catch.

Team

- 3 Senior Designers

- 2 Pilot Development Pods

- 1 Engineering Advocate